Windows 2025 is now available on dedicated servers at XLHost

Microsoft has released Windows Server 2025, and XLHost is pleased to offer it on XLHost dedicated servers. Some of the highlights of Windows Server 2025 are:

RAID (Redundant Array of Independent Disks) is a data storage technology that combines multiple physical disk drives into one or more logical units to improve performance, redundancy, or both. Here are some key RAID levels:

PCIe NVMe SSDs have been available for enterprise and datacenter deployments for several years. With the typical NVMe SSD performing ten to fifteen times better than the average SATA SSD, it has been very tempting to deploy these drives for applications that require a lot of IOPS, storage throughput, or both. Until now, using NVMe drives in servers has come with compromises in resiliency and overall performance. While the drives themselves have redundant NAND flash memory, they can still fail in a number of ways that could impact the availability of applications.

Hi again. This article is part two in the series that began with Choosing the right dedicated server platform. Here we will discuss various storage-related issues, from hard drives to RAID levels and more. The goal at XLHost is to help you choose dedicated servers that will provide you with the best price-to-performance ratio for your application(s).

It is a beautiful spring day at the XLHost campus in Columbus, OH, so I will admit that I was looking for an excuse to get outside and enjoy it. One of the big questions we get from customers is: what really goes into hosting dedicated servers? In this series of articles we will try to help you understand how XLHost takes a lowly pile of servers and turns it into a dedicated server service, with a brief look (inside and out) at the XLHost datacenter in Columbus, OH.

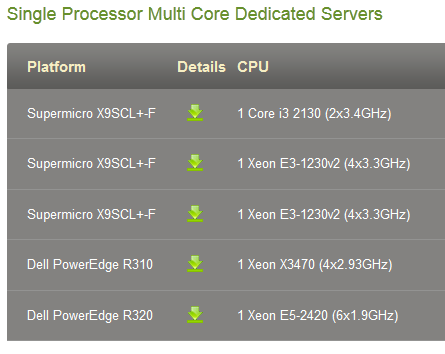

If you have already decided that you are going to host your application on a dedicated server, the next question you will want to answer is which dedicated server is right for you. In this article we will try to make it easier to understand some of the differences between the various server platforms XLHost uses. This article is the first in a multi-part series where we will cover all aspects of dedicated server hardware.

Thanks to the bombardment of spam aimed at business and consumer inboxes, it is becoming increasingly difficult for legitimate marketing and transactional email messages to reach the hallowed inbox. XLHost frequently gets questions about email delivery, and I want to share with you some best practices I have learned and free tools you can use to help ensure that the email you send from your dedicated servers gets delivered to the inbox.

SSDs have been generally available for mainstream enterprise and consumer use since about 2008. As the average price per GB of SSD storage rapidly declines and performance, reliability, and endurance continue to increase, many enterprises are turning to SSD storage as a way of delivering the data their applications need to achieve outstanding performance.

First, let me welcome you to the new XLHost blog and introduce myself. I am Drew Weaver, Chief Technical Officer at XLHost. If you're a customer of XLHost, we have most likely interacted at some point in the past. I have been with XLHost since 1999, and it is basically my job to oversee the operation of anything that has a blinking light and make sure that our amazing technical support staff continues to be the best in the industry. My posts will likely skew towards technical matters, so bear with me if my geekness shines through. (I will try to keep it in check.)